Google’s removal of the num=100 parameter in September 2025 stirred significant discussion in the SEO community. This feature allowed SEO tools to append &num=100 to a Google search URL and receive the top 100 organic results on a single page instead of the usual 10.

When it stopped working, some bloggers argued that the change was temporary or even good news for SEOs. Let’s explore it.

What Actually Changed

Around September 10, 2025, SEO tools reported that the &num=100 parameter no longer returned a 100‑result SERP, and on September 14, the industry confirmed that the feature was gone. Google later responded that “the use of this URL parameter is not something that we formally support.”

In other words, the removal was intentional rather than a bug or a glitch. The option to set the number of results via the search preferences interface had already been removed in 2018; however, the URL parameter continued to function until September 2025.

Before the change, a single request could return 100 results. After the update, collecting the same data required ten requests (using the &start parameter to paginate).
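
To make the difference concrete, here is a minimal sketch of the two URL patterns. It only builds strings and sends no requests. The function names are hypothetical, and the page size of 10 and the zero-based `start` offset are assumptions about the default SERP layout rather than documented guarantees.

```python
from urllib.parse import urlencode

BASE = "https://www.google.com/search"


def legacy_url(query):
    """Single URL that used to return up to 100 results (no longer honored)."""
    return f"{BASE}?{urlencode({'q': query, 'num': 100})}"


def paginated_urls(query, depth=100, page_size=10):
    """One URL per results page, stepping the `start` offset by page_size."""
    return [
        f"{BASE}?{urlencode({'q': query, 'start': start})}"
        for start in range(0, depth, page_size)
    ]


urls = paginated_urls("seo tools")
print(legacy_url("seo tools"))  # one request before the change
print(len(urls), "requests needed after the change")
```

For a depth of 100, the paginated version yields ten URLs, with `start` running from 0 to 90.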

Performance Data Before and After Removal

Several SEO professionals have observed the effects of Google’s removal of the ‘num=100’ parameter, which previously allowed searchers and SEO tools to view up to 100 results on a single SERP. Based on early tests and shared case studies:

Crawling & Indexation: There have been little to no measurable changes in the crawl budget or indexation frequency. Google’s crawlers never relied on the num=100 parameter to decide what to crawl.

Rank Tracking: SEO platforms that used num=100 for bulk rank extraction had to adapt, but the rankings themselves showed no volatility attributed to this change.

User Signals: No broad shifts in impressions or CTR were associated with this change. The click distribution continues to concentrate heavily on the first 10 results.

Impact on Rank Tracking Tools and Pricing

The removal hit rank‑tracking tools particularly hard.

Infrastructure Cost: Tools that once made one request per keyword must now make up to ten, which significantly increases server load and proxy bandwidth. Providers such as Keyword Insights have openly described it as a “10× increase in crawl load.” Many SEO experts have warned that subscription prices may increase or that the default keyword tracking depth may be reduced.

Reduced Visibility: Ahrefs explained that while their update frequency remains the same, the number of positions they can reliably track per keyword has decreased.

Broken Dashboards: Some rank‑tracking services experienced errors and temporary outages while rewriting their scraping routines. Users also reported encountering CAPTCHA and throttling issues when attempting to quickly collect a large number of results.

Impact on Google Search Console Data

Many SEOs initially assumed that the change would only affect third-party tools, but data from Google Search Console (GSC) soon revealed a surprising side effect:

Sharp drops in desktop impressions

Around the time the parameter was disabled, numerous GSC properties recorded steep declines in desktop impressions. The average position metrics also appeared to improve simultaneously.

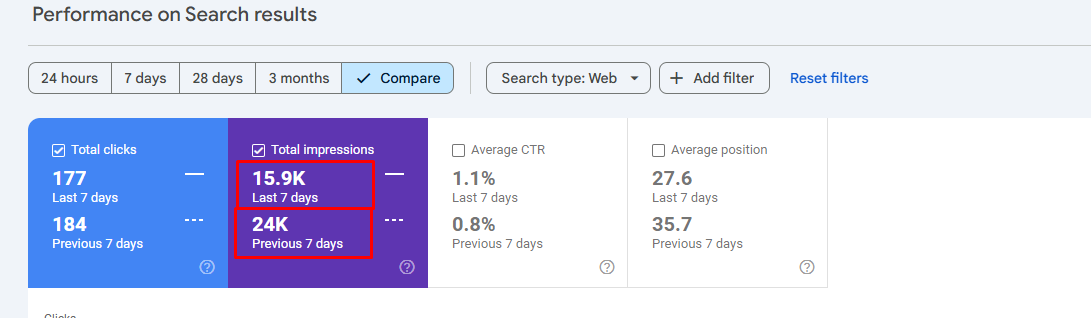

For example, the property shown above lost up to 10,000 impressions over the last 7 days.

Bot‑generated impressions removed

The prevailing theory is that bots loading 100‑result pages were generating impressions for positions 11‑100. An impression is counted whenever a URL appears on the current page, regardless of whether the user scrolls to it. With the parameter gone, bots no longer load deeper results, so the inflated impressions disappear.
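
The arithmetic behind this theory can be illustrated with a simplified model in which average position is an impression-weighted mean. All numbers below are hypothetical, and Search Console's real calculation works per query and page, so treat this as a sketch of the effect rather than a reproduction of GSC's method.

```python
from collections import Counter


def average_position(impressions_by_position):
    """Impression-weighted mean of ranking positions."""
    total = sum(impressions_by_position.values())
    weighted = sum(pos * n for pos, n in impressions_by_position.items())
    return weighted / total


# Hypothetical impressions keyed by ranking position.
human = Counter({3: 800, 8: 200})    # real users, page-one rankings
bots = Counter({45: 500, 80: 500})   # scrapers loading 100-result pages

with_bots = human + bots             # what was reported before the change
print(sum(with_bots.values()), average_position(with_bots))
print(sum(human.values()), average_position(human))
```

In this example, removing the bot traffic halves total impressions (2,000 to 1,000) while average position "improves" from 33.25 to 4.0, even though nothing about the site's actual rankings changed.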

Some bloggers claimed that the change did not impact Search Console data; this is incorrect because many sites saw significant drops in impressions and shifts in average position after the removal.

A clearer picture should emerge as more data becomes available in the coming days.

Possible Reasons for the Change

Google has not provided a detailed explanation for disabling &num=100, but SEO analysts have suggested several motives.

Limit scraping and defend against AI competitors:

This parameter removal coincides with Google’s broader strategy to combat AI-driven scraping. The change appears to be designed to reduce bulk data extraction by AI platforms such as ChatGPT and Perplexity, which use SERP APIs to analyze the top 100 results for their responses.

Data Quality Improvement:

The removal improves data accuracy. Many of those lost impressions were never viewed by real users, making current metrics more reflective of genuine user behavior.

Is Removal “Good News” for SEOs?

Potential Benefits

Cleaner data and truer baselines:

Without bot‑inflated impressions, GSC metrics reflect human behavior more accurately. Year-on-year comparisons should note this baseline shift, but future reports may be more representative.

Encourages focus on the top results:

Because most clicks occur on page 1, emphasizing the top 10–20 positions aligns better with user behavior.

Downsides and Challenges

Higher costs and reduced insight:

SEO tools now face a 10x increase in operational costs for the same data depth. This is likely to result in the following:

- Higher subscription prices

- Reduced data depth (tracking only the top 20 to 50 instead of top 100)

- Slower reporting cycles
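
The trade-off between tracking depth and request volume can be estimated with simple arithmetic. The sketch below assumes 10 results per page and a hypothetical portfolio of 1,000 keywords; real costs also depend on proxies, retries, and CAPTCHA handling, which are not modeled here.

```python
import math


def requests_needed(keywords, depth, page_size=10):
    """Requests required to fetch `depth` results for each keyword."""
    pages_per_keyword = math.ceil(depth / page_size)
    return keywords * pages_per_keyword


keywords = 1000     # hypothetical portfolio size
before = keywords   # one num=100 request per keyword

for depth in (100, 50, 20):
    after = requests_needed(keywords, depth)
    print(f"top {depth}: {after} requests ({after / before:.0f}x the old load)")
```

Under these assumptions, tracking the top 100 takes 10,000 requests, while cutting depth to the top 50 or top 20 brings that down to 5,000 or 2,000, which is why reduced depth is an obvious lever for tool providers.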

Compromised historical comparisons:

Impressions and average position metrics from before and after September 2025 are not directly comparable.

PERSONAL STRATEGIC INSIGHT: This change represents Google’s acknowledgment that AI platforms pose a legitimate threat to its search monopoly. By making bulk data extraction more expensive, Google is forcing its competitors to either invest heavily in infrastructure or accept limited data access. This levels the playing field for smaller SEO teams while simultaneously protecting Google’s data advantage.

FUTURE OUTLOOK: This change signals a broader shift toward Google controlling how search data are accessed and analyzed. SEO professionals should expect more restrictions on automated data collection and prepare for a future where official APIs become the primary data source.

Additionally, SEOs should consider whether tracking results beyond page one or two is still worthwhile, given the diminishing returns. With CTR for positions beyond 20 being close to negligible, this change may act as a reminder that optimization resources are best spent on top 10 visibility.